Jupyter Notebooks are a shell replacement

An attempt to provide another measure for deciding when to use Jupyter notebooks, and when not to.

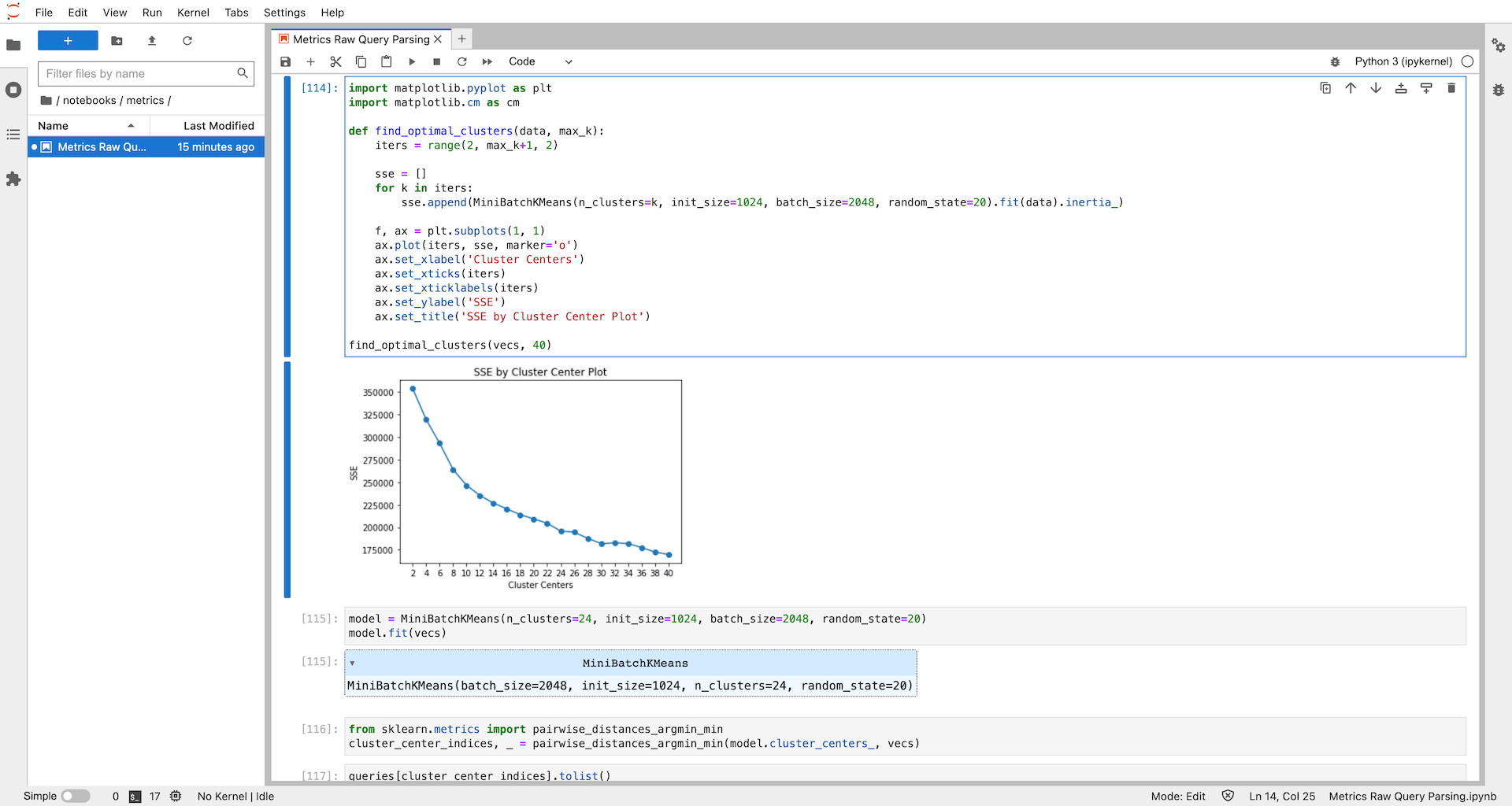

Jupyter notebooks and the like have become the go-to tool for data-related tasks in Python. There are good reasons for that: notebooks allow interactive coding and data crunching, can display any visualization that can be rendered in HTML, and, as a result, are great for showcasing experiments. If you don't know notebooks yet, make sure to try JupyterLab for the next experiment you conduct. It doesn't need to be Python, and it doesn't need to be data-related: you may, for example, want to experiment with a new API or with regular expressions for a specific problem.

There are already many great articles and blog posts on the pros and cons of notebooks for different use cases, and this post is not meant to be just another one. For anyone interested, I recommend these for an overview:

- Towards Data Science – Why Jupyter Notebooks aren't all that bad (friendly warning: Medium member-only story)

- Google Cloud – Jupyter Notebook Manifesto

- Martin Fowler's Blog – Don't put data science notebooks into production

- MLOps.community – Jupyter Notebooks In Production?

Instead, I would like to contribute explicitly to the discussion on Jupyter notebooks "in production". A consensus seems to be forming that notebooks are great for prototyping and experimentation but should not be used "in production". In short, these are the main arguments against using them in production:

- Since notebooks are just JSON files that store all inputs, outputs, and metadata together, version control, and especially diffing and merging, is complicated.

- Cells can be executed independently while sharing the same interpreter (and thus the same state, including variables). If a notebook is not tidied up during or after experimentation so that it runs from top to bottom, reproducibility suffers.

- Production code should be tested. However, there is no native concept of unit tests in Jupyter notebooks.

- Modularization is not an intended feature of notebooks.
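To make the first point concrete: an `.ipynb` file is plain JSON in which every code cell stores its `source` next to its `outputs` and an `execution_count`. The sketch below uses only Python's standard `json` module on a small, hand-written notebook (assumed to follow the nbformat 4 layout; the helpers `strip_outputs` and `source_lines` are hypothetical names for this example) and clears outputs and execution counts, which is roughly what output-stripping tools do before a notebook is committed. It is a simplified illustration, not a replacement for such tools.

```python
import json

# A tiny, hand-written notebook in the nbformat 4 JSON layout (illustrative only).
NOTEBOOK = {
    "cells": [
        {
            "cell_type": "markdown",
            "metadata": {},
            "source": ["# Experiment\n"],
        },
        {
            "cell_type": "code",
            "execution_count": 7,
            "metadata": {},
            "outputs": [
                {"output_type": "stream", "name": "stdout", "text": ["42\n"]}
            ],
            "source": ["print(6 * 7)\n"],
        },
    ],
    "metadata": {
        "kernelspec": {"name": "python3", "display_name": "Python 3", "language": "python"}
    },
    "nbformat": 4,
    "nbformat_minor": 4,
}


def strip_outputs(nb: dict) -> dict:
    """Return a copy of the notebook with code-cell outputs and execution counts cleared."""
    stripped = json.loads(json.dumps(nb))  # cheap deep copy of plain JSON data
    for cell in stripped["cells"]:
        if cell["cell_type"] == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return stripped


def source_lines(nb: dict) -> list:
    """Join each cell's source list into the plain text a reviewer actually cares about."""
    return ["".join(cell["source"]) for cell in nb["cells"]]


if __name__ == "__main__":
    clean = strip_outputs(NOTEBOOK)
    print(json.dumps(clean["cells"][1], indent=1))
    print(source_lines(clean))
```

Re-running a single cell changes its `execution_count` and `outputs`, so two notebooks with identical code can still produce a noisy diff; after stripping, only the `source` entries remain to compare.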

Nevertheless, the sheer number of actively developed solutions for automated execution, testing, version control, and even library development with notebooks shows that they are in fact widely used for cases that some would call "production". Part of the explanation for this disagreement is surely that there is a broad range of definitions of "production": for some, it may be any code executed automatically as part of a (possibly internal) business process; others would consider only code directly executed by users to be production code. This is why I want to add another, simple measure to the discussion on when to use Jupyter notebooks. It has been quite helpful for me because it is usually easy to apply, and it gives us at LeanIX a clear, common guideline:

Use Notebooks as a better REPL shell, not as a poorer IDE.

REPL (read-eval-print loop) shells are interactive coding environments, which is usually what you want when experimenting with data, APIs, or anything code-related. However, experimenting in the shell has two major flaws, both of which notebooks solve:

- The shell is not persistent. As soon as you close the shell, any results are lost for good. Notebooks not only save inputs and outputs but also let you arrange code cells and add Markdown descriptions.

- The shell doesn't allow rich visualizations. Notebooks, being browser-based by nature, can display not only static images (such as matplotlib plots) but also interactive HTML/JS visualizations.

Combined, these two points make notebooks our best-in-class solution at LeanIX for conducting experiments with data and sharing the results. According to the guideline above, though, this is also where notebook development should end. Multiple engineers working on the same code in a way that requires diffing and merging notebooks is already a yellow flag. At the very latest, as soon as we start executing code in an automated fashion, it becomes a task for IDEs and Python scripts.
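As an illustration of that hand-over, here is a hypothetical sketch of what a notebook cell computing a few summary statistics might look like once it is promoted to a plain Python module. The module, the function `summarize`, and its return keys are invented for this example; the point is that the logic now lives in an importable function with tests that a runner such as pytest can collect, rather than in a cell whose result may depend on execution order.

```python
"""Hypothetical module promoted from a notebook cell (all names are illustrative)."""
import statistics
from typing import Dict, Iterable


def summarize(values: Iterable[float]) -> Dict[str, float]:
    """Return the count, mean, and maximum of the given values."""
    data = list(values)
    if not data:
        # Reject empty input explicitly rather than returning a misleading result.
        raise ValueError("summarize() needs at least one value")
    return {
        "count": float(len(data)),
        "mean": statistics.fmean(data),
        "max": max(data),
    }


def test_summarize() -> None:
    # A plain test function that pytest would collect from this module.
    assert summarize([1.0, 2.0, 3.0]) == {"count": 3.0, "mean": 2.0, "max": 3.0}


def test_summarize_rejects_empty_input() -> None:
    try:
        summarize([])
    except ValueError:
        return
    raise AssertionError("expected ValueError for empty input")
```

Saved as, say, `summarize.py`, the module could be checked with `pytest summarize.py`, imported by a scheduled job, or still be called from a notebook for further exploration.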

Footnote

1. I'm probably not the first to bring up this distinction.

Published by Michael Tannenbaum
